From 3D Render to "Photorealism" with help from ChatGPT

I’ve been working in 3D for over twenty years.

I started long before things were fast or automated. Back then, if you wanted something to exist, you built it. Piece by piece. Vertex by vertex. I spent years working in tools like 3D Studio Max, Blender, and Maya, modeling everything from small devices to full environments and eventually characters.

But modeling is only half the story. What really brings a 3D object to life is the material. And that’s where things get interesting.

Even though you’re working in a 3D space, materials are created in 2D. You unwrap a model like you’re peeling the skin off an object and laying it flat. Then you paint it. Metal needs to feel cold, worn, and imperfect. Skin needs variation, subtle color shifts, and tiny flaws that make it believable.

I used tools like Photoshop, Affinity Photo 2, and Substance Painter to build those surfaces. You’re not just coloring something. You’re telling a story through texture. Scratches, pores, roughness, reflection. All of it matters.

Over time, you start to realize something important. Realism isn’t about perfection. It’s about convincing the eye.

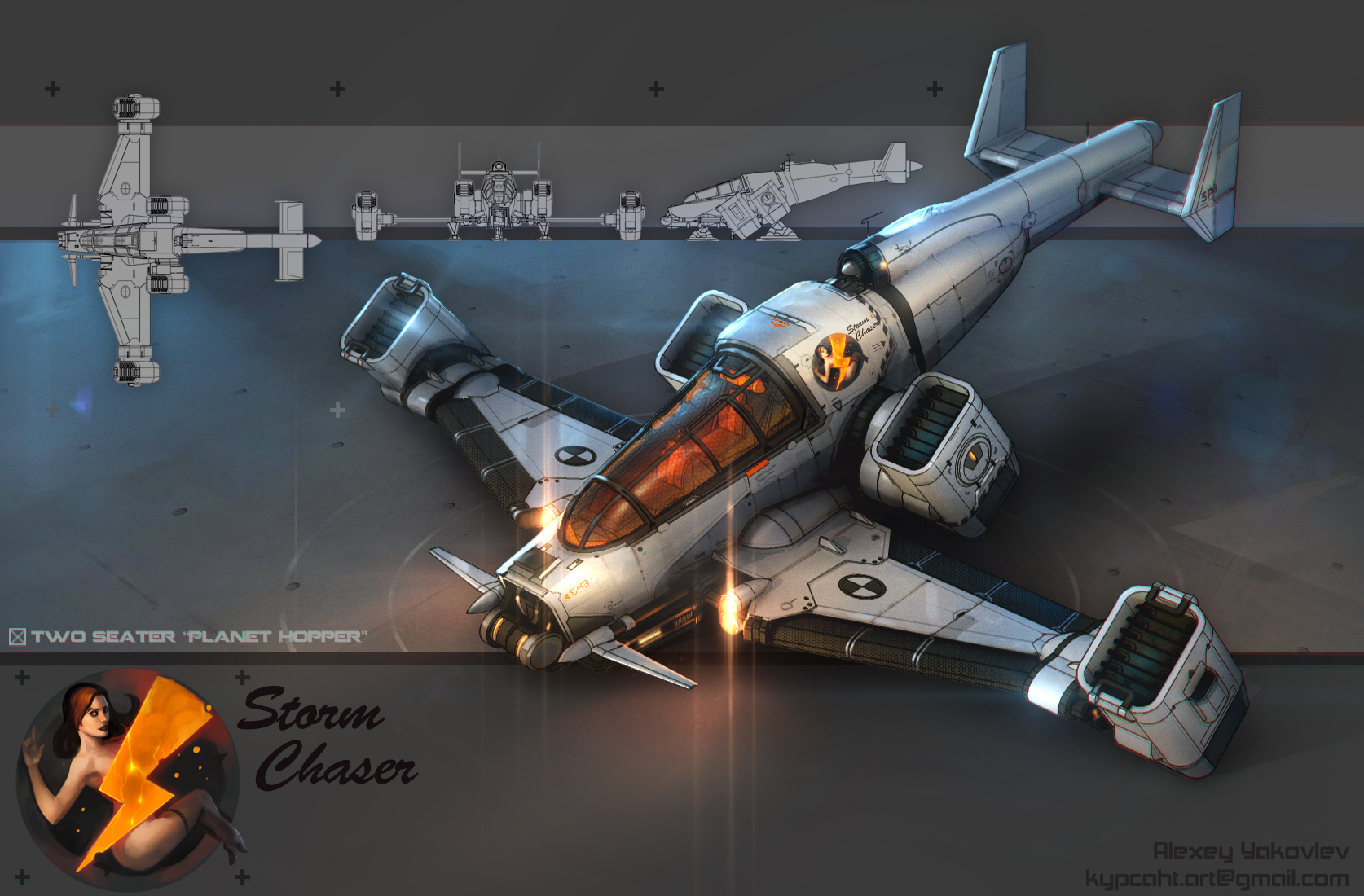

The “Storm Chaser”

Years later, I came across Daz 3D, and that changed my direction in a big way.

Daz focuses heavily on character creation. Faces, proportions, subtle human details. It gave me a way to explore something I had always struggled with.

I’ve always had a clear vision of my characters. I could see them in my mind. I knew how they moved, how they felt, how they carried themselves. But I wasn’t someone who could sit down and sketch them out on paper. So I built a different process.

Before The Amaranth Chronicles: Deviant Rising came out in 2017, I worked with a small team of artists to create concept pieces. I paid for artwork that helped bring these characters into the world visually. At the same time, I started building some of them myself in 3D.

It wasn’t a solo effort. Close friends and talented artists stepped in where my skills had limits. That collaboration mattered. It shaped the world in ways I couldn’t have done alone.

Daz became a bridge for me. It let me take something that lived in my head and begin translating it into something visual, even without traditional drawing skills.

And recently, I started experimenting again.

This time, I took my 3D renders and brought them into ChatGPT. Not to replace what I had made, but to reinterpret it. To see what would happen if I pushed those images a little closer to something that feels like a photograph.

Lithia Boson

On the left is a 3D render of my character Lithia Boson, which I created using my own methods and rendered in Daz 3D. This is how she exists through a traditional workflow. Every element you see, from the lighting to the skin tone to the subtle imperfections, was part of a process I’ve refined over years. It’s clean, stylized, and intentionally controlled.

On the right is that same image after being processed through ChatGPT using a photorealistic prompt.

At first glance, the two images feel similar. But the differences start to reveal themselves the longer you look. In the original render, the lighting is precise but slightly uniform. The skin is smooth, almost too perfect. The materials behave correctly, but they still feel like they belong to a digital environment. In the photorealistic version, something shifts.

The skin picks up subtle variation. Tiny imperfections start to appear. Light wraps around the face differently, breaking in places you wouldn’t normally plan for. The reflections feel less “placed” and more like they’re reacting to a real-world environment. Even the softness around the eyes and lips changes how the character feels emotionally. It’s still Lithia. But she feels less like a render and more like a moment captured from a camera. It’s almost like you can see a soul behind her eyes.

That’s what stood out to me. Not that one is better than the other, but that they represent two different interpretations of the same character. One is built through deliberate control. The other introduces a layer of unpredictability that pushes the image closer to something we instinctively recognize as real.

And that’s where this process becomes interesting to me. It’s not about replacing the original work. It’s about taking something that already exists and seeing how far it can be pushed into another visual language without losing its identity.

Cade Path

On the left is my original 3D render of Cade Path. This is a character I’ve spent a long time refining, both visually and conceptually. The lighting, the skin, the materials on the jacket, all of it was intentional. It’s a controlled image. Every highlight is placed. Every shadow is calculated.

On the right is that same render after running it through ChatGPT with a photorealistic prompt. This is where things get interesting.

The original image feels sharp and stylized. The skin is clean. The lighting has that polished, almost synthetic precision that comes from a 3D engine doing exactly what you tell it to do.

But in the photorealistic version, the image loosens. The skin starts to breathe. You begin to see subtle texture variation that wasn’t explicitly authored. The highlights on the face break up in a more natural way. The lighting feels less like it’s been placed and more like it’s reacting. Even the expression shifts slightly, and it almost looks like a screenshot from a stylized animated series.

Not because the model changed, but because style adds weight. Cade’s face carries a bit more tension. It’s a small difference, but it changes how you read the character.

And that’s where I think this becomes powerful. It’s not AI versus artists. It’s AI as a new lens.

Nyreen, Paul and Jerula

After seeing this shift with Lithia and Cade, I started testing the same process across other characters in The Amaranth Chronicles. What surprised me wasn’t that it worked. It was how consistently it produced something creative.

Across different faces, skin tones, lighting environments, and even completely different emotional expressions, the same pattern kept showing up. The original renders are more “precise”: designed and intentional.

The “photorealistic” versions introduce something harder to control. Subtle asymmetry. Skin variation. Light that doesn’t feel placed, but discovered.

With Nyreen, the change softens the image just enough to make her feel more present. There’s a warmth in her expression that becomes easier to connect to, like you’re not just looking at a character, but at a person mid-conversation.

With Paul, the shift is more grounded. The structure of his face holds up almost identically, but ChatGPT’s attempt at “realism” adds weight. He feels less like a model and more like someone standing in a real space, under real light.

And with Jerula, the effect is almost cinematic. The lighting already had a strong mood in the original render, but ChatGPT’s “photorealistic” version pushes it in an interesting direction. The skin reacts more naturally. The highlights feel less controlled and more environmental. It leans into something closer to a filmed moment than a rendered one.

None of these replace the original work. And more importantly, they stay true to the identity of each character. That’s what made this feel less like a trick, and more like a tool.

————— Update —————

Concept Art to Photographic Images

On the left is concept art of Lithia. On the right is a photoreal interpretation of her.

The original image isn’t a render. It’s an illustration. A moment designed by hand, where every line, every highlight, every decision is intentional. It captures the idea of Lithia. Her posture, her focus, the way she interacts with the world around her. But it’s still an interpretation.

When I took that same character and pushed her through a 3D and photoreal process, something changed. The image stopped feeling authored and started feeling observed.

The lighting no longer behaves like something placed. It behaves like something happening. The skin carries subtle variation that wasn’t explicitly painted. The environment feels less like a backdrop and more like a space. And Lithia herself shifts, not in who she is, but in how she exists. In the photoreal image, it feels like you’ve stepped into the room with her. Like the moment isn’t being shown. It’s being witnessed.

On the left is a concept illustration from aboard the Freedom’s Reach. It’s expressive, slightly exaggerated, and designed to communicate the moment clearly. You immediately understand the dynamic between the characters. The humor reads fast. The poses are intentional. It’s a scene that’s been staged for you.

On the right is that same moment, reinterpreted through a photoreal process. The structure is still there. The characters haven’t changed. But the feeling does.

The expressions become more subtle. The humor doesn’t jump out the same way. Instead, it settles into something quieter, more natural. It feels less like a scene being presented and more like a moment you’ve stepped into mid-conversation.

Even the body language shifts slightly. In the concept version, each character is clearly signaling how you should read them. In the photoreal version, those signals soften. You have to read them the way you would in real life, through small cues, posture, eye direction, tension.

It becomes less about being told what’s happening and more about recognizing it. That’s where the difference really lives.

The difference becomes even more pronounced when the scene carries weight.

On the left is a concept illustration from the bridge. It’s structured, readable, and deliberate. Every character is positioned in a way that clearly communicates their role. The captain’s gesture anchors the entire composition. You immediately understand who is in control and what kind of moment this is. It’s clean. Focused. Designed to be read quickly.

On the right is that same scene, translated into a photoreal interpretation. The structure remains. The composition is nearly identical. But the feeling shifts.

The lighting becomes heavier. It no longer just highlights the characters; it defines the space. The materials carry weight. The walls feel industrial. The air feels occupied. Even the subtle reflections and imperfections begin to suggest a lived-in environment. And the characters change with it.

The captain’s gesture is still the focal point, but it lands differently. It doesn’t feel like a symbol of authority. It feels like a decision being made in real time. The kind of moment where everyone in the room understands the stakes without needing it explained.

The rest of the crew follows that shift. Their expressions become quieter, but more grounded. Less exaggerated, more believable. You’re no longer being shown a scene. You’re sitting inside it.

● ● ●

The results were interesting. Not perfect, sometimes a little off, but enough to change how I think about the process. It felt like discovering a step I didn’t know existed.

There’s a lot of conversation right now about AI replacing artists, and I understand that fear. Creative work is personal. It’s time, identity, and years of trial and error wrapped into something you can finally show people. When something new enters that space, it can feel like it’s trying to take your place. But that’s not what this felt like to me.

AI didn’t create these characters. It didn’t design them, model them, or understand who they are. That came from years of work. From building them piece by piece. From getting things wrong and figuring out why they didn’t work.

What AI does is something different. It takes something that already exists and translates it. It pushes it into another visual language. Sometimes it enhances realism. Sometimes it introduces subtle changes you wouldn’t have thought to make. Sometimes it misses completely. But when it works, it shows you a version of your own work you hadn’t seen yet. That’s not replacement. That’s perspective.

If anything, it feels like adding a new member to the team. One that moves fast. One that doesn’t always understand the assignment. But every once in a while, it hands you something unexpected and you stop and think, “Wait… there’s something there.”

And that’s where it becomes exciting, because the craft doesn’t disappear. It expands.